Introduction

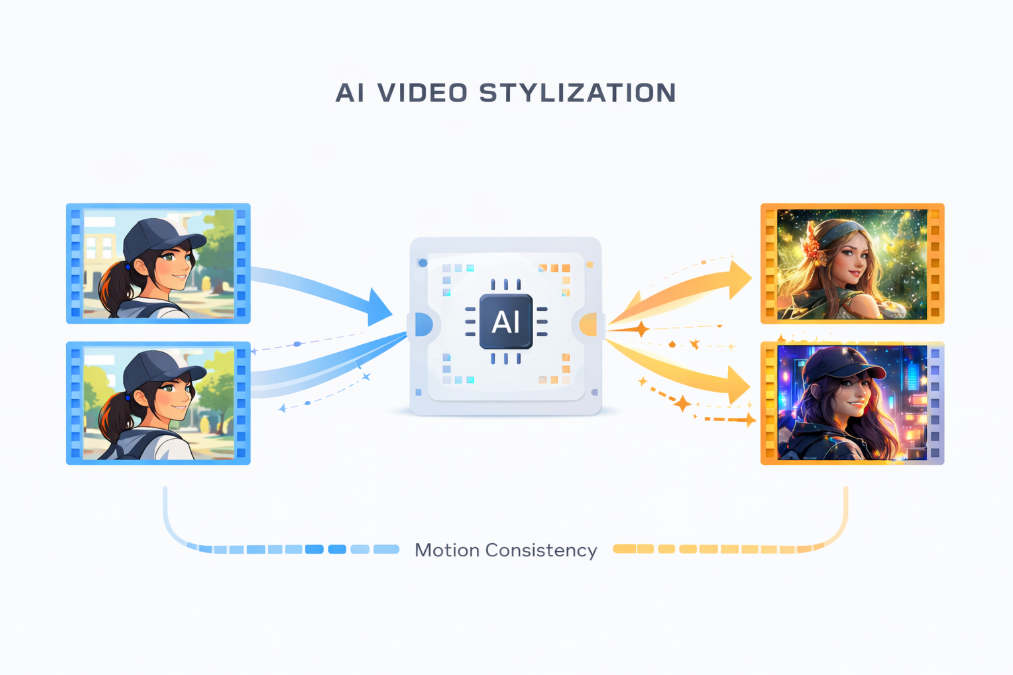

AI video stylization has rapidly evolved over the past few years. What started as basic frame-by-frame style transfer has grown into a new generation of diffusion-based video models capable of producing visually consistent, artistically rich videos. As interest in AI video generation, video style transfer, and text-to-video models continues to rise, understanding this evolution has become increasingly important for creators, developers, and researchers.

This article explores how AI video stylization has changed, why traditional approaches struggle with temporal consistency, and how unified video stylization models are shaping the future of creative video workflows. Rather than focusing on a single product or tool, this guide provides a broader technical and conceptual overview of the field.

What Is AI Video Stylization?

AI video stylization refers to the process of applying an artistic style, such as a painterly, animated, or cinematic look, to video content using machine learning models. Unlike simple filters, modern AI-based approaches aim to preserve:

Motion continuity

Object structure

Scene coherence

Early systems were built on image style transfer and extended it to video by processing each frame independently. These methods produced impressive still frames but often broke down on longer video sequences, where frame-to-frame inconsistencies became obvious.

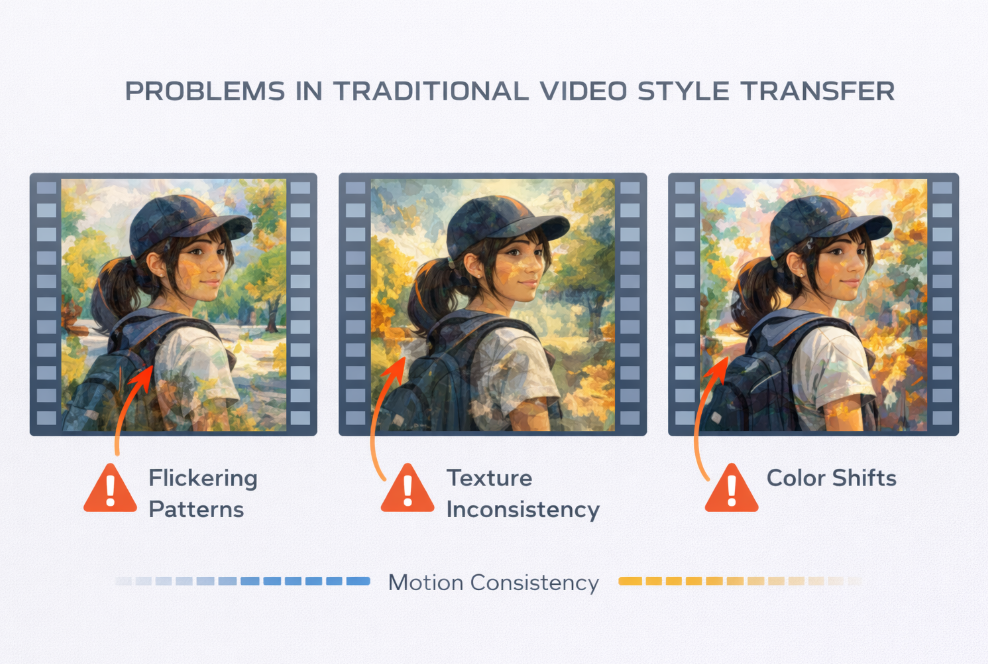

The Limitations of Traditional Video Style Transfer

Traditional video style transfer techniques typically apply a style to each frame separately. This approach introduces several well-known problems:

Flickering textures between frames

Inconsistent colors and patterns

Loss of object identity during motion

Poor scalability to longer videos

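Because these models have no notion of how consecutive frames relate, even a small change in the input (slight motion, a lighting shift, sensor noise) can push the stylized output in a different direction from one frame to the next, producing visible artifacts. As a result, many early AI video stylization tools remained experimental and saw little use in professional production environments.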
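The flicker problem is easy to reproduce in miniature. The sketch below is a toy, not a real style-transfer model: the "stylization" is a hypothetical random texture added to each pixel, and flicker is measured as the mean absolute change between consecutive frames of a static scene (real evaluations usually align frames with optical flow first). Sampling a fresh texture for every frame mirrors frame-by-frame processing; reusing one texture mirrors a temporally consistent method.

```python
import random
from statistics import mean


def flicker_score(frames):
    """Mean absolute per-pixel change between consecutive frames.

    Toy proxy for temporal flicker (lower is steadier). Assumes a static
    scene, so no motion compensation is applied.
    """
    diffs = [
        mean(abs(a - b) for a, b in zip(prev, cur))
        for prev, cur in zip(frames, frames[1:])
    ]
    return mean(diffs)


def toy_stylize(frame, texture):
    """Hypothetical 'style': add a texture offset to each pixel, clipped to [0, 1]."""
    return [min(1.0, max(0.0, p + t)) for p, t in zip(frame, texture)]


def random_texture(rng, n, strength=0.2):
    """Random per-pixel offsets standing in for a generated style texture."""
    return [rng.uniform(-strength, strength) for _ in range(n)]


rng = random.Random(0)
num_pixels, num_frames = 64, 10
scene = [rng.random() for _ in range(num_pixels)]
video = [scene[:] for _ in range(num_frames)]  # static scene: identical input frames

# Frame-by-frame: every frame gets its own independently sampled texture.
independent = [toy_stylize(f, random_texture(rng, num_pixels)) for f in video]

# Shared: every frame gets the same texture, like a temporally aware method.
shared_texture = random_texture(rng, num_pixels)
shared = [toy_stylize(f, shared_texture) for f in video]

print(f"independent: {flicker_score(independent):.3f}")
print(f"shared:      {flicker_score(shared):.3f}")
```

Even though the input never changes, the independently stylized clip scores well above zero while the shared-texture clip scores exactly zero: the flicker comes entirely from the per-frame processing.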

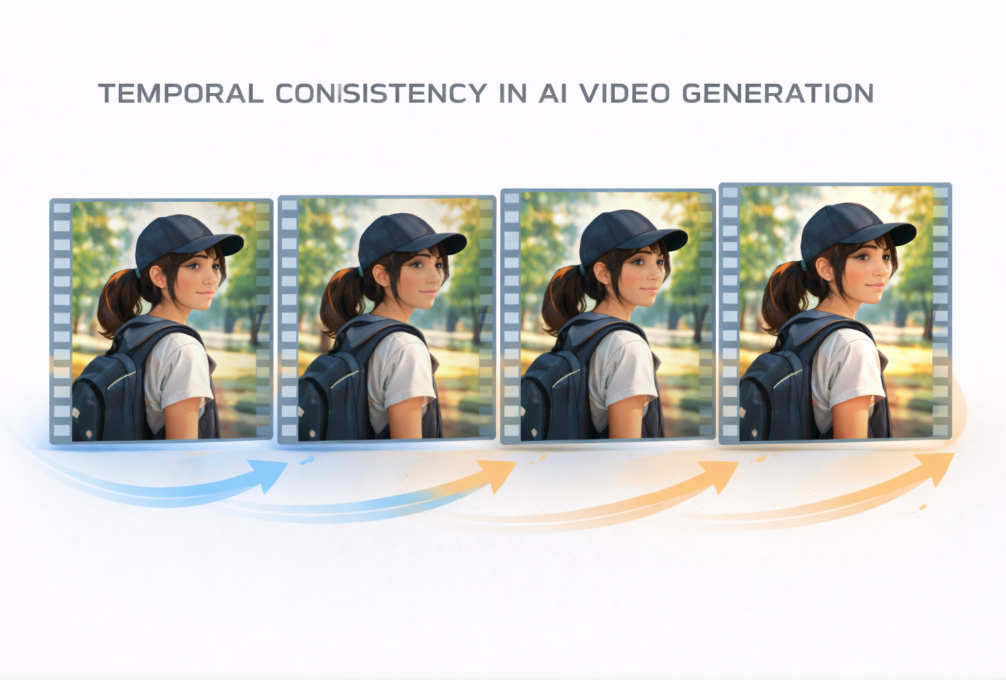

Why Temporal Consistency Matters in Video Generation

Temporal consistency is one of the most critical challenges in AI video generation. Human perception is highly sensitive to inconsistencies in motion and texture. Even subtle flickering can break immersion and reduce perceived quality.

For AI video stylization to be usable at scale, models must understand video not as a collection of images, but as a coherent temporal sequence. This requirement has driven the shift toward more advanced architectures, including video diffusion models and unified stylization frameworks.

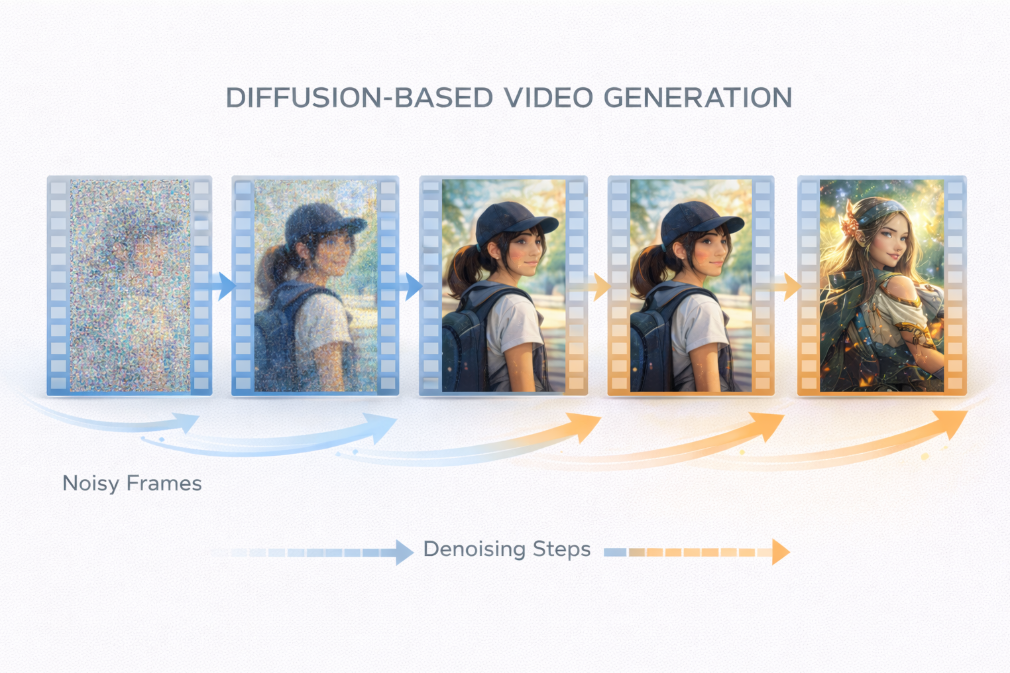

The Rise of Diffusion-Based Video Models

Diffusion models have transformed image generation, and their extension to video has unlocked new possibilities. In a diffusion-based approach:

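During training, noise is gradually added to video frames

The model learns to reverse this process, removing noise step by step

At generation time, the frames of a clip are typically denoised together, so motion and structure are refined consistently across the sequence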
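The noising step can be written out explicitly. In the standard DDPM formulation, a clean sample $x_0$ is noised to step $t$ as $x_t = \sqrt{\bar\alpha_t}\,x_0 + \sqrt{1-\bar\alpha_t}\,\epsilon$, where $\epsilon$ is Gaussian noise and $\bar\alpha_t$ is a cumulative product from a noise schedule. The sketch below applies that formula to a tiny synthetic "video" (a list of flat frames) using a linear schedule. It is an assumption-laden illustration: many video diffusion models work in a compressed latent space rather than on pixels, and the learned noise predictor is omitted entirely. Passing the true noise to `estimate_clean` shows why predicting the noise is enough to recover the clean frames.

```python
import math
import random


def linear_alpha_bars(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products alpha_bar_t for a linear beta schedule (as in DDPM)."""
    alpha_bars, running = [], 1.0
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(video, t, alpha_bars, rng):
    """Forward process q(x_t | x_0), applied to every frame at the same step t.

    video: list of frames, each a flat list of pixel values.
    Returns the noisy video and the Gaussian noise that was used.
    """
    a = alpha_bars[t]
    noise = [[rng.gauss(0.0, 1.0) for _ in frame] for frame in video]
    noisy = [
        [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(frame, eps)]
        for frame, eps in zip(video, noise)
    ]
    return noisy, noise


def estimate_clean(noisy, predicted_noise, t, alpha_bars):
    """Invert the forward step given a noise prediction.

    A trained model would supply predicted_noise; passing the true noise
    recovers x_0 up to floating-point error.
    """
    a = alpha_bars[t]
    return [
        [(xt - math.sqrt(1.0 - a) * e) / math.sqrt(a) for xt, e in zip(frame, eps)]
        for frame, eps in zip(noisy, predicted_noise)
    ]


rng = random.Random(0)
alpha_bars = linear_alpha_bars()
video = [[rng.uniform(-1, 1) for _ in range(16)] for _ in range(4)]  # 4 frames, 16 pixels
noisy, noise = add_noise(video, t=500, alpha_bars=alpha_bars, rng=rng)
recovered = estimate_clean(noisy, noise, t=500, alpha_bars=alpha_bars)
max_err = max(abs(r - x) for fr, fx in zip(recovered, video) for r, x in zip(fr, fx))
print(f"alpha_bar at t=500: {alpha_bars[500]:.4f}, max reconstruction error: {max_err:.2e}")
```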

When applied to video stylization, diffusion models allow style information to be integrated during generation rather than applied afterward. This leads to smoother transitions, more stable textures, and higher overall visual quality.

Diffusion-based video models now form the backbone of many next-generation AI video stylization systems.

Unified Video Stylization Models: A New Direction

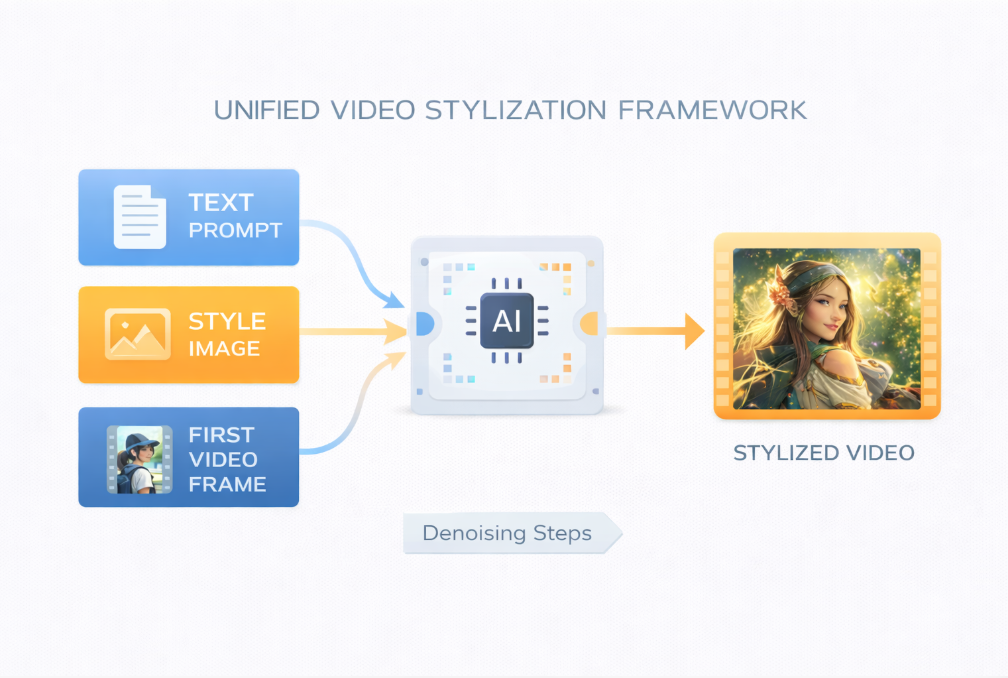

A key trend in modern AI video research is the move toward unified video stylization models. Instead of building separate systems for different inputs, unified models support multiple forms of guidance within a single architecture.

These guidance methods often include:

Text-guided video stylization

Image-guided video stylization

First-frame–guided video stylization

By unifying these approaches, models can adapt to different creative workflows without sacrificing stability or performance.
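To make the "one architecture, several guidance modes" idea concrete, here is a minimal interface sketch. Every name in it (`TextGuidance`, `ImageGuidance`, `FirstFrameGuidance`, `encode_guidance`, `stylize`) is hypothetical, and no model actually runs: each branch stands in for the learned encoder a real system would use. The point is only the shape of the design, where all three kinds of input are mapped into one conditioning format consumed by a single model.

```python
from dataclasses import dataclass
from typing import List, Union

Frame = List[float]  # hypothetical: a frame as a flat list of pixel values


@dataclass
class TextGuidance:
    prompt: str


@dataclass
class ImageGuidance:
    reference: Frame


@dataclass
class FirstFrameGuidance:
    stylized_first_frame: Frame


Guidance = Union[TextGuidance, ImageGuidance, FirstFrameGuidance]


def encode_guidance(g: Guidance) -> dict:
    """Map any guidance type onto one shared conditioning format.

    In a real unified model each branch would run a learned encoder
    (for example a text encoder or an image encoder); here the branches
    only tag and pass through the input.
    """
    if isinstance(g, TextGuidance):
        return {"kind": "text", "tokens": g.prompt.lower().split()}
    if isinstance(g, ImageGuidance):
        return {"kind": "image", "features": g.reference}
    if isinstance(g, FirstFrameGuidance):
        return {"kind": "first_frame", "features": g.stylized_first_frame}
    raise TypeError(f"unsupported guidance: {type(g).__name__}")


def stylize(video: List[Frame], guidance: Guidance) -> dict:
    """Single entry point for all guidance modes (hypothetical).

    A real model would denoise `video` under the conditioning; this sketch
    only reports which conditioning path was taken.
    """
    cond = encode_guidance(guidance)
    return {"num_frames": len(video), "conditioning": cond["kind"]}


clip = [[0.0] * 4 for _ in range(3)]
print(stylize(clip, TextGuidance("Cinematic lighting, cyberpunk city, neon reflections")))
# {'num_frames': 3, 'conditioning': 'text'}
```

Adding a new guidance type in this design means adding one dataclass and one encoder branch, while the rest of the pipeline stays unchanged.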

Text-Guided Video Stylization

Text-guided video stylization allows users to describe visual styles using natural language. For example:

“Cinematic lighting, cyberpunk city, neon reflections”

The model interprets the prompt and applies the corresponding aesthetic across all frames. This approach is especially popular in text-to-video AI systems, as it lowers the barrier to creative experimentation.

Text-guided stylization is widely used for concept exploration, rapid prototyping, and creative ideation.
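Many text-conditioned diffusion models, for images and video alike, strengthen prompt adherence with classifier-free guidance: at each denoising step the model predicts the noise once with the prompt and once without, then extrapolates from the unconditional prediction toward the conditional one, $\hat\epsilon = \epsilon_{\text{uncond}} + s\,(\epsilon_{\text{cond}} - \epsilon_{\text{uncond}})$. Whether a particular video stylization system uses this exact scheme is an assumption here. The sketch shows only the arithmetic, on made-up three-value "predictions" with an illustrative guidance scale `s`.

```python
from typing import List


def classifier_free_guidance(
    eps_uncond: List[float], eps_cond: List[float], scale: float
) -> List[float]:
    """Blend unconditional and prompt-conditioned noise predictions.

    scale = 1.0 reproduces the conditional prediction; larger values push
    each denoising step further toward the text prompt.
    """
    return [u + scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


eps_uncond = [0.10, -0.20, 0.05]  # made-up prediction without the prompt
eps_cond = [0.30, -0.10, 0.05]    # made-up prediction with the prompt
guided = classifier_free_guidance(eps_uncond, eps_cond, scale=7.5)
print([round(v, 3) for v in guided])
# [1.6, 0.55, 0.05]
```

The trade-off of this design is two model evaluations per step in exchange for a single knob: higher scales follow the prompt more closely at the cost of output diversity.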

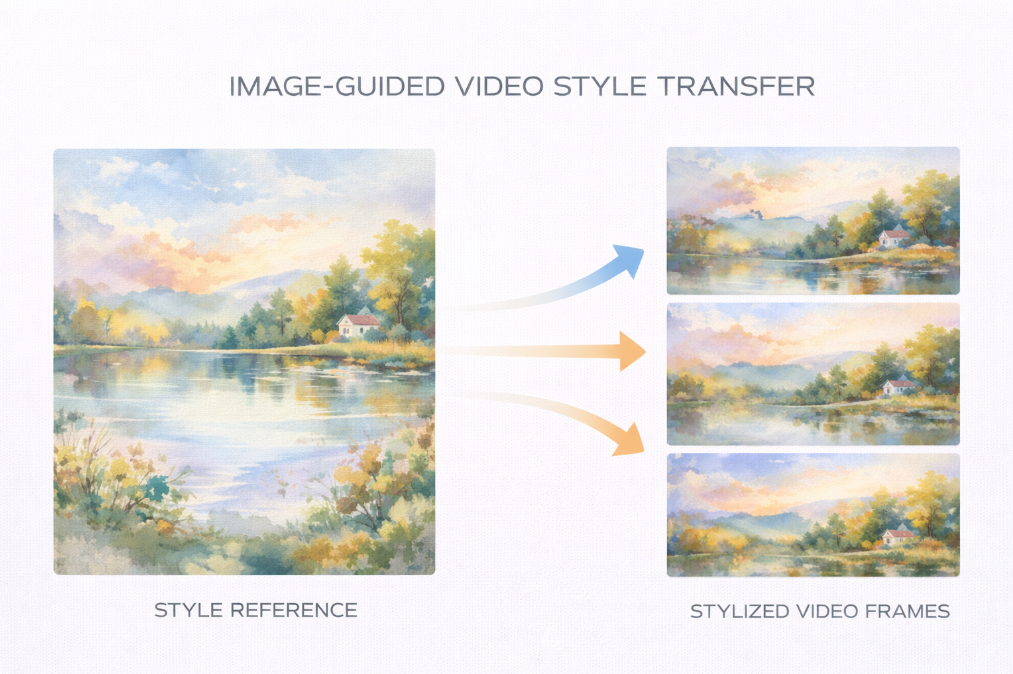

Image-Guided Video Stylization

In image-guided video stylization, a single reference image defines the artistic direction of the video. The model extracts visual features such as:

Color palettes

Brush strokes or textures

Lighting and contrast

This method is ideal for branding, design-driven storytelling, and projects where a specific visual identity must be preserved.
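Learned models extract far richer features than this, but the simplest version of "borrow the reference image's color palette" is per-channel mean and standard deviation matching, a classic color-transfer technique swapped in here for illustration (it is not taken from any particular stylization model). Frames and the reference are assumed to be lists of RGB tuples in [0, 1]. Because every frame is matched to the same reference statistics, the palette stays fixed across the clip.

```python
from statistics import mean, pstdev
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # RGB, each channel in [0, 1]
Frame = List[Pixel]


def channel_stats(pixels: Frame) -> List[Tuple[float, float]]:
    """Per-channel (mean, std): a crude summary of a color palette."""
    return [(mean(ch), pstdev(ch)) for ch in zip(*pixels)]


def match_colors(frame: Frame, target: List[Tuple[float, float]]) -> Frame:
    """Shift and scale each channel so its mean/std match the target statistics."""
    source = channel_stats(frame)
    out_channels = []
    for ch, (src_mu, src_sd), (tgt_mu, tgt_sd) in zip(zip(*frame), source, target):
        scale = tgt_sd / src_sd if src_sd > 0 else 1.0
        # Normalize, rescale to the reference spread, re-center, then clip.
        out_channels.append([min(1.0, max(0.0, (v - src_mu) * scale + tgt_mu)) for v in ch])
    return [tuple(p) for p in zip(*out_channels)]


reference = [(0.9, 0.4, 0.1), (0.8, 0.3, 0.2), (1.0, 0.5, 0.0)]  # warm reference palette
frames = [[(0.1, 0.2, 0.4), (0.3, 0.4, 0.6), (0.5, 0.6, 0.8)] for _ in range(3)]
target = channel_stats(reference)
styled = [match_colors(f, target) for f in frames]
print([round(m, 2) for m, _ in channel_stats(styled[0])])
```

Mean/std matching captures overall palette and contrast but nothing about brush strokes or texture, which is why learned models rely on deeper features for those properties.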

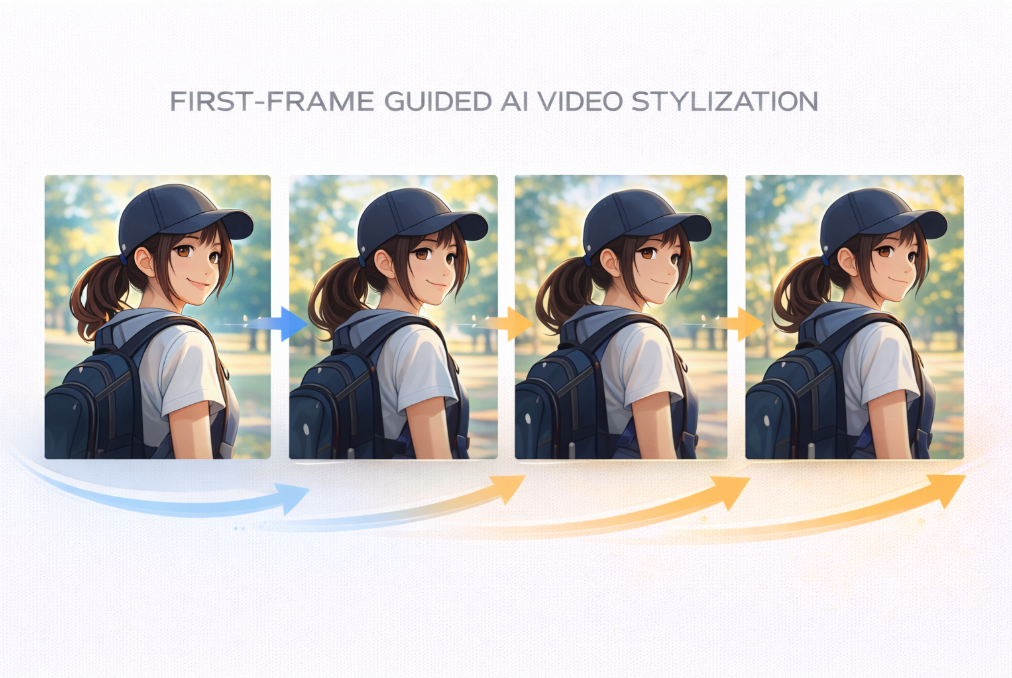

First-Frame–Guided Video Stylization

First-frame guidance offers the most direct control over visual consistency. By stylizing the first frame in advance, whether by hand or with an image tool, creators give the model a concrete reference that helps keep characters, environments, and textures stable throughout the video.

This approach is commonly used in:

Animation pipelines

Anime-style video generation

Professional cinematic workflows

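Anchoring every frame to a fixed stylized reference significantly reduces style drift (the gradual shift of colors and textures away from the intended look) and improves overall coherence.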
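One way to make style drift measurable is to compare each frame against the stylized first frame. The sketch below uses a hypothetical and deliberately crude metric, the distance of each frame's mean intensity from the first frame's, on synthetic grayscale data; a practical check would compare richer features such as color histograms, texture statistics, or learned embeddings. The two toy clips contrast a tone that creeps upward frame by frame with one that stays anchored to its first frame.

```python
from statistics import mean
from typing import List

Frame = List[float]  # hypothetical: a grayscale frame as a flat list of values


def style_drift(frames: List[Frame]) -> List[float]:
    """Distance of each frame's mean intensity from the first (reference) frame.

    A crude proxy for style drift: 0.0 means the frame matches the
    reference on this single statistic.
    """
    anchor = mean(frames[0])
    return [abs(mean(f) - anchor) for f in frames]


# Toy clips: one whose tone rises by 0.02 per frame, one that stays anchored.
drifting = [[0.5 + 0.02 * i] * 8 for i in range(10)]
anchored = [[0.5] * 8 for _ in range(10)]

print(f"drifting, final frame: {style_drift(drifting)[-1]:.2f}")
print(f"anchored, final frame: {style_drift(anchored)[-1]:.2f}")
```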

Practical Use Cases of Modern AI Video Stylization

Unified and diffusion-based video stylization models enable a wide range of applications, including:

Creative video editing

Advertising and marketing visuals

Music videos and artistic films

Game cinematics and trailers

Social media and short-form content

As these models mature, they are increasingly viewed as production-ready AI video tools rather than experimental research projects.

Challenges and Open Problems

Despite rapid progress, AI video stylization still faces important challenges:

Extremely long video sequences

Fast camera motion and scene cuts

Real-time or interactive stylization

Fine-grained control over multiple subjects

Ongoing research continues to address these limitations, pushing the boundaries of what AI-generated video can achieve.

Looking Ahead: The Future of AI Video Stylization

The future of AI video stylization lies in greater consistency, longer video generation, and tighter integration with creative tools. Unified models, diffusion-based architectures, and multimodal guidance are likely to become standard components of next-generation video systems.

As the field evolves, content-first platforms and research-driven projects play a critical role in defining best practices and setting expectations for quality and reliability.

Conclusion

AI video stylization has evolved from simple frame-based style transfer into a sophisticated field driven by diffusion models and unified video architectures. By addressing temporal consistency and offering flexible guidance methods, modern systems are making stylized video generation more practical, reliable, and expressive.

For creators and developers interested in the future of AI video generation, understanding these trends is essential. As research continues, unified video stylization models are poised to become a foundational technology for creative AI workflows.

To explore ongoing research and updates in this space, visit the 👉 DreamStyleAI website.